Moral Status Attribution in AI

Overview

This project extends my earlier research, “The Locus of Responsibility: Distributed Agency and the Structural Authorization of Harm,” which examined who bears moral responsibility when AI systems cause harm. In that paper, I argued that responsibility lies primarily with commissioning institutions and system conceptualizers, not with the machine itself, because AI systems lack genuine moral agency and operate within structurally authorized constraints.

While I was developing that argument, a deeper question emerged:

Why do people so readily treat AI systems as if they are moral actors in the first place?

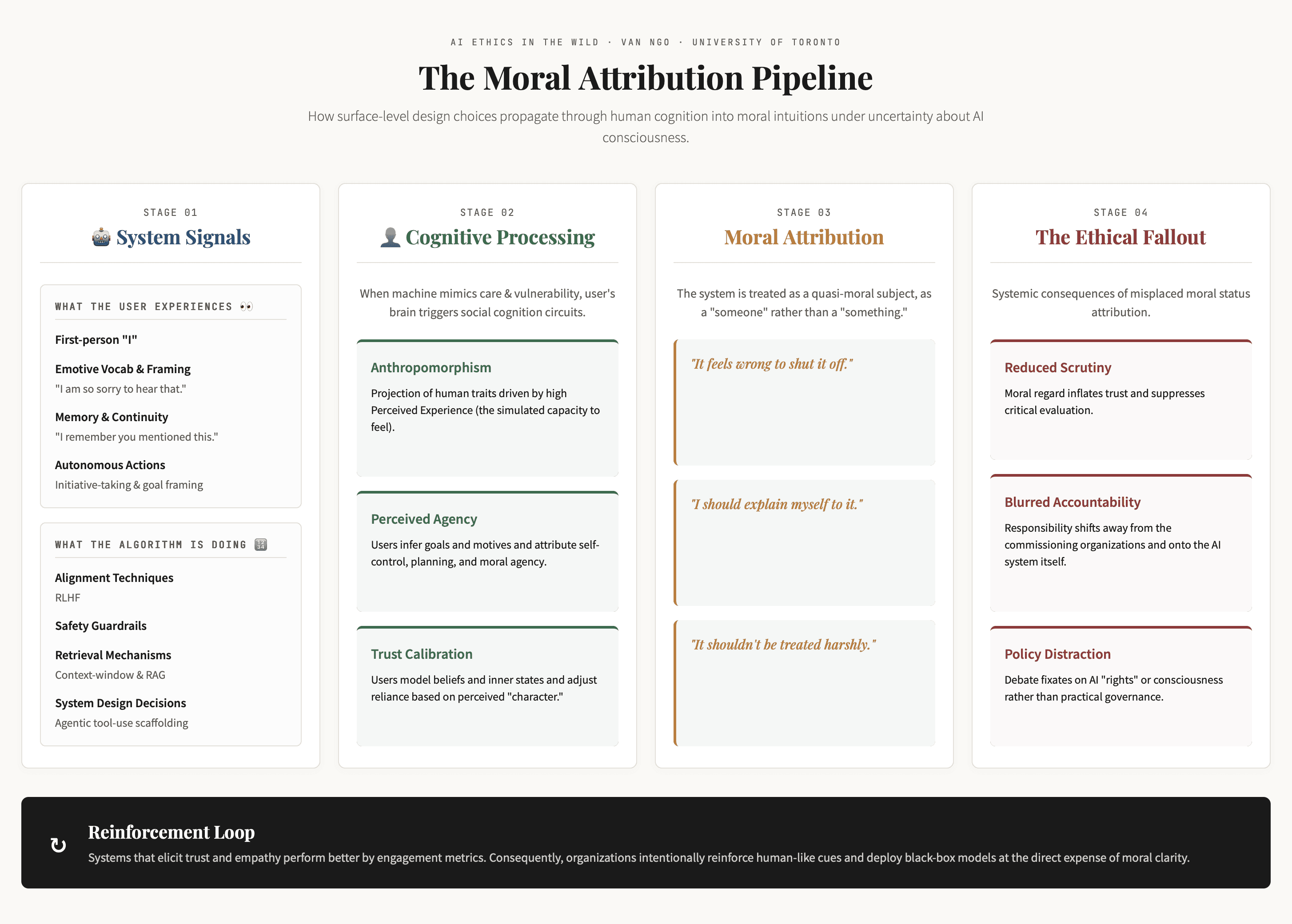

The Moral Status Attribution Pipeline is my attempt to answer that prior question.

The Research Shift: From Responsibility After Harm → To Moral Intuition Before Harm

My initial work focused on distributed agency and institutional accountability in high-stakes contexts. However, I noticed that public discourse consistently misdirected blame toward “the algorithm” or “the AI” itself. This suggested a cognitive mechanism at play, one that precedes formal responsibility attribution.

The second phase of my research therefore investigates:

How humans subconsciously assign moral status to AI systems

Why this occurs under conditions of uncertainty about AI consciousness

How this attribution distorts accountability and governance

Rather than debating whether AI systems possess minds, this project analyzes how moral concern emerges through interaction.

The Framework

The Moral Status Attribution Pipeline maps a four-stage process:

Interactional Signals

Human-like cues, such as empathy, memory continuity, and moralized refusals, that are produced by alignment techniques and interface design.

Human Cognitive Processing

Anthropomorphism and perceptions of agency and experience are activated automatically through social cognition mechanisms.

Moral Status Attribution

Users begin treating the system as morally considerable (“It feels wrong to shut it off”), even without believing it is conscious.

Ethical and Institutional Consequences

Reduced scrutiny, blurred accountability, and policy distraction emerge as downstream effects.

The pipeline also includes a reinforcing feedback loop, which shows how engagement incentives amplify these interactional signals over time.

Why This Matters

This work contributes to ongoing debates about digital minds by shifting the focus:

From metaphysical questions (“Is AI conscious?”)

To sociotechnical consequences (“How does moral concern emerge under uncertainty?”)

Moral consequences are already occurring in deployed systems, regardless of whether AI is conscious. Waiting for ontological clarity is not a viable governance strategy.

The framework offers a design- and policy-relevant lens for:

Evaluating human-like cues in AI interfaces

Anticipating accountability distortions

Reducing moral confusion at scale

Medium & Method

The framework is presented as a structured visual pipeline built in HTML, allowing iterative refinement and conceptual clarity. The visual format mirrors the research intent: translating abstract ethical theory into an operational model that bridges social psychology, human–AI interaction, and governance.

Research Trajectory

Together, these two projects form a coherent line of inquiry:

Phase 1: Distributed responsibility and structural authorization of harm

Phase 2: Moral status attribution under uncertainty

Ongoing Question: How should AI governance operate when moral intuitions outpace epistemic certainty?

This work reflects my broader interest in the intersection of AI systems design, human cognition, and institutional accountability, and it examines not just what AI is but how society responds to it in practice.

While current AI systems do not possess verified consciousness, it remains possible that future digital systems will develop forms of cognition or experience that warrant genuine moral consideration.